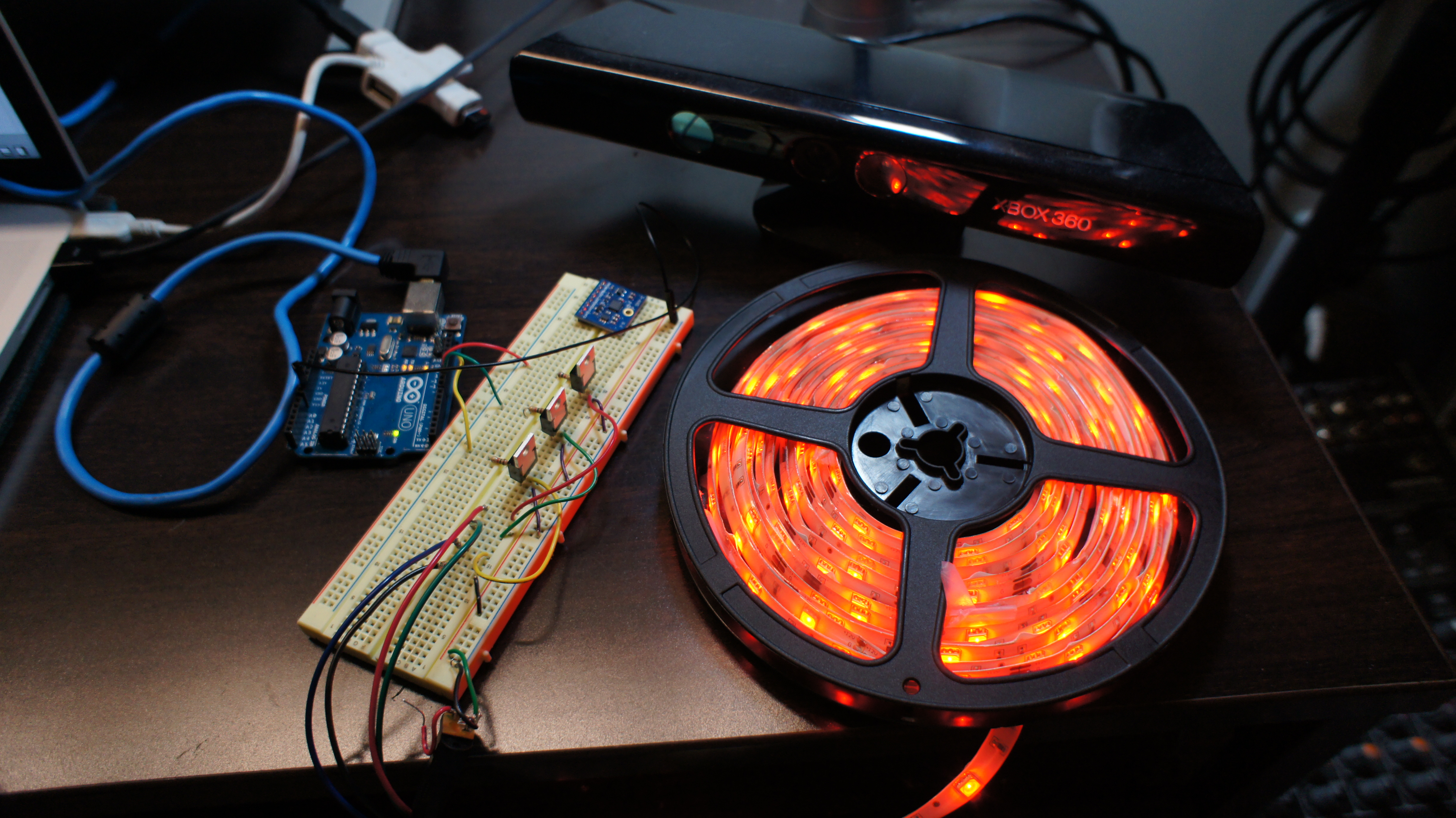

This setup uses Jon Bellona’s SimpleKinect app to extract the position of each joint tracked by the Microsoft Kinect. I wrote a Max patch to translate this data into scaled values that are sent to Ableton Live. It took some experimenting, and a fair amount of walking around the room, before the scaling ranges were well tuned.
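The scaling step can be sketched outside of Max as a simple linear mapping. This is only an illustration in Python, not the actual patch: the function names, the input range of -0.8 to 0.8, and the 0 to 127 output range are all assumptions chosen for the example, and the clamping is an added safeguard for when someone steps outside the calibrated area.

```python
def scale(value, in_min, in_max, out_min, out_max):
    """Linearly map value from [in_min, in_max] to [out_min, out_max].

    The input is clamped first, so positions outside the calibrated
    range stick to the nearest end of the output range.
    """
    if in_max == in_min:
        raise ValueError("input range must not be empty")
    t = (value - in_min) / (in_max - in_min)
    t = max(0.0, min(1.0, t))  # clamp to 0..1
    return out_min + t * (out_max - out_min)


def hand_x_to_control(x):
    """Map a hand x position to a 0..127 control value.

    The -0.8..0.8 range is a hypothetical calibration; in practice it
    would come from walking around the room and watching the values.
    """
    return round(scale(x, -0.8, 0.8, 0, 127))
```

Tuning the setup then amounts to adjusting the input range for each joint until the full sweep of the control value matches the space people actually move through.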

The idea is to develop the Kinect into a gestural input interface for audience members walking around a room. The movement of listeners can be used to change aspects of the sound, making them “part” of the music. I am particularly interested in the possibilities this brings to the stage for “audience interaction”. I wanted to add an LED response because the impact of physical gestures changing the sound is heightened when it is accompanied by visual feedback. The color of an entire room can change as a person moves, while the same movement modulates the sound.
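As a rough sketch of driving light and sound from the same movement, the Python snippet below derives both an LED color and a control value from one position. Everything specific here is an assumption for illustration: the function name, the 4 m room width, the red-to-blue hue sweep, and the 0 to 127 control range are not taken from the actual setup.

```python
import colorsys


def position_to_feedback(x, room_width=4.0):
    """Return ((r, g, b), control) for a person at position x.

    x is the distance in meters from one wall (hypothetical units);
    room_width is an assumed room size used to normalize the position.
    """
    t = max(0.0, min(1.0, x / room_width))  # normalized 0..1, clamped
    # Sweep the hue from red (0) to blue (2/3) across the room.
    r, g, b = colorsys.hsv_to_rgb(t * 2 / 3, 1.0, 1.0)
    rgb = tuple(round(c * 255) for c in (r, g, b))
    control = round(t * 127)  # same position also drives a sound parameter
    return rgb, control
```

Because both outputs come from the same normalized value, the color and the sound stay in step as the person crosses the room.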